The client is a leading global hospitality enterprise operating thousands of properties across more than 100 countries, with a large and geographically distributed workforce.

Employees relied on fragmented knowledge sources such as SharePoint, Nuxeo, OneDrive, and internal repositories, resulting in significant time spent locating case studies, RFPs, SOWs, and compliance documents. The client sought to modernise productivity through an enterprise-grade Generative AI assistant capable of secure, context-aware information retrieval.

The client required a scalable, secure AI assistant to unify siloed knowledge systems and enable conversational access across enterprise repositories.

The solution needed to:

- Enable role-based querying across multiple knowledge bases via a single conversational interface

- Support hybrid search with multi-index retrieval, query transformation, re-ranking, and context optimisation

- Integrate with enterprise SSO and enforce data-level permissions

- Provide a cloud-native, multi-model architecture capable of supporting new LLM integrations and connector expansion

- Unify multi-source enterprise knowledge into a centralized, governed data platform

- Enable role-based access control (RBAC) for secure and compliant knowledge retrieval

- Improve visibility into query patterns, usage trends, and knowledge access behavior

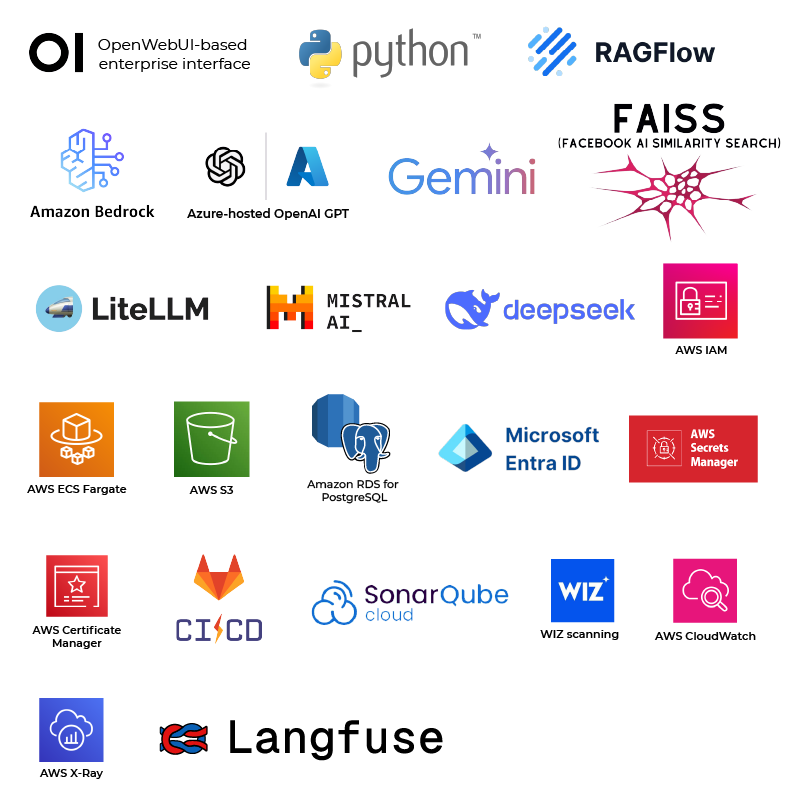

A four-layer Agentic AI platform was deployed on AWS, featuring a SmartPal Agent Orchestrator with dynamic tool binding, configurable RAG middleware, and secure multi-model routing.
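To illustrate what model-agnostic routing can look like in practice, here is a minimal sketch. It is not the client's implementation: `ModelRouter`, `Provider`, and the stub model names are hypothetical, and the stub lambdas stand in for real vendor SDK calls.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional

# Assumption: a provider is any callable that maps a prompt to a completion.
Provider = Callable[[str], str]


@dataclass
class ModelRouter:
    """Hypothetical abstraction layer that hides which LLM serves a request."""

    default: str
    providers: Dict[str, Provider] = field(default_factory=dict)

    def register(self, name: str, provider: Provider) -> None:
        # New models are added by registration, without changing callers.
        self.providers[name] = provider

    def complete(self, prompt: str, model: Optional[str] = None) -> str:
        # Fall back to the default model when the caller does not pick one.
        name = model or self.default
        if name not in self.providers:
            raise KeyError(f"no provider registered for model '{name}'")
        return self.providers[name](prompt)


# Stub providers stand in for real vendor SDK calls (illustrative only).
router = ModelRouter(default="stub-a")
router.register("stub-a", lambda prompt: f"[stub-a] {prompt}")
router.register("stub-b", lambda prompt: f"[stub-b] {prompt}")

print(router.complete("Find the latest SOW template"))
print(router.complete("Find the latest SOW template", model="stub-b"))
```

Because callers depend only on `complete`, a new model can be added with a single `register` call, which is the property the abstraction layer is meant to provide.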

Key solution features included:

- Agent Orchestrator – Manages prompt handling, model abstraction with context caching, parallel tool execution, RBAC enforcement, and conversation memory, ensuring secure, role-based access to enterprise knowledge.

- Multi-Model LLM Layer – Supports OpenAI GPT, Gemini, LLaMA, Mistral, DeepSeek, and Amazon Bedrock via an abstraction layer enabling model-agnostic routing and future integrations.

- Advanced Retrieval Engine – Hybrid search combining analytics-driven keyword and vector retrieval across multiple knowledge bases with metadata filtering, re-ranking, and context optimisation.

- Enterprise RAG Middleware (ERM) – Abstraction layer managing document authorisation, tagging, metadata, agent execution, configuration controls, and collaborative features, supporting governed access across knowledge sources.

- Enterprise Data Ingestion – Automated pipelines via Apache Airflow ingest enterprise and Outlook data into FAISS-based indexes and PostgreSQL-backed compliance indexes, enabling multi-source data integration across repositories.

- Observability – Self-hosted Langfuse captures LLM traces and supports monitoring and prompt evaluation, providing query analytics and usage insights that support better decision-making.