Software engineering used to follow a predictable rhythm. Teams gathered requirements, designed applications, developed features, tested releases, and deployed updates in structured cycles. These models worked well when applications were largely deterministic and product behavior was defined entirely by code.

AI is shifting software engineering from deterministic, code-defined behavior to applications whose behavior is shaped by data, models, and feedback loops.

Many modern applications now incorporate machine learning and generative models to classify, predict, recommend, and generate content. Because model outputs are probabilistic and can change as data and training evolve, teams must treat the model, data pipeline, and production monitoring as first-class engineering artifacts, not one-time implementation details.

This shift makes it more urgent to modernize the software development life cycle so that engineering processes support intelligent, continuously learning products.
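To make "production monitoring as a first-class engineering artifact" a little more concrete, the sketch below compares the distribution of a model's output scores in production against a baseline using the Population Stability Index (PSI). It is only an illustration: the function name, the assumption that scores lie in [0, 1], the ten equal-width buckets, and the 0.2 alert threshold (a common rule of thumb, not a standard) are all choices made for this example, not part of any specific pipeline described here.

```python
import math
from typing import List, Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
) -> float:
    """Return the PSI between two samples of model scores assumed to lie in [0, 1]."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")

    def bucket_shares(scores: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for score in scores:
            # Clamp the bucket index so 1.0 and out-of-range scores land in an edge bucket.
            index = min(max(int(score * bins), 0), bins - 1)
            counts[index] += 1
        # A small floor keeps the logarithm defined when a bucket is empty.
        return [max(count / len(scores), 1e-6) for count in counts]

    base = bucket_shares(baseline)
    cur = bucket_shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


# Hypothetical usage: compare last month's scores with this week's scores.
baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.5]
current_scores = [0.6, 0.7, 0.8, 0.9, 0.95]
ALERT_THRESHOLD = 0.2  # assumed rule-of-thumb value for this sketch

if population_stability_index(baseline_scores, current_scores) > ALERT_THRESHOLD:
    print("Score distribution has shifted; review the model and its input data.")
```

A check like this can run on a schedule alongside ordinary service health checks, which is one way to treat model behavior as something to be observed continuously rather than verified once at release.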

Engineering organizations must rethink not just what they build, but how they build it. This reevaluation extends to the very foundation of AI adoption strategies.

Rethinking AI Adoption: Moving Beyond Feature-Level Integration

Many organizations begin their AI journey by adding isolated capabilities, such as recommendation engines, chatbots, predictive analytics, or automation tools, to existing products. However, such feature-level adoption often leads to fragmented data pipelines and limited scalability.

Instead, AI must be embedded as a core design principle within product architecture.

Applications need to be designed to incorporate model outputs dynamically so that experiences and decisions can adapt in real time. Data environments must support continuous ingestion, contextual feedback, and evolving training pipelines. Additionally, infrastructure must enable rapid experimentation, deployment, and model monitoring throughout the product lifecycle.

This means modern engineering increasingly centers on AI-driven products that learn, adapt, and continuously improve.
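One way to picture an application that incorporates model outputs dynamically is a decision path that accepts a prediction only when the model is confident and otherwise falls back to a deterministic rule. The sketch below is a minimal illustration under stated assumptions: `Prediction`, `decide`, the 0.8 confidence threshold, and the loan-style labels are hypothetical names and values invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Prediction:
    label: str
    confidence: float  # assumed to be a probability-like score in [0, 1]


def decide(
    features: Dict[str, float],
    model: Callable[[Dict[str, float]], Optional[Prediction]],
    fallback: Callable[[Dict[str, float]], str],
    min_confidence: float = 0.8,
) -> str:
    """Use the model's label when it is confident enough, else the fallback rule."""
    try:
        prediction = model(features)
    except Exception:
        # A failing model call should degrade to the rule, not break the request.
        prediction = None

    if prediction is not None and prediction.confidence >= min_confidence:
        return prediction.label
    return fallback(features)


# Hypothetical usage with stand-in callables instead of a real model.
def stub_model(features: Dict[str, float]) -> Prediction:
    return Prediction(label="approve", confidence=0.55)


def rule_based(features: Dict[str, float]) -> str:
    return "manual_review"


print(decide({"amount": 1200.0}, stub_model, rule_based))  # prints "manual_review"
```

Keeping the threshold and the fallback explicit makes the probabilistic part of the product visible in the design, so it can be tuned and monitored as the model evolves.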

The AI-Assisted Engineering Pipeline

As AI becomes integral to software development, engineering pipelines themselves are evolving.

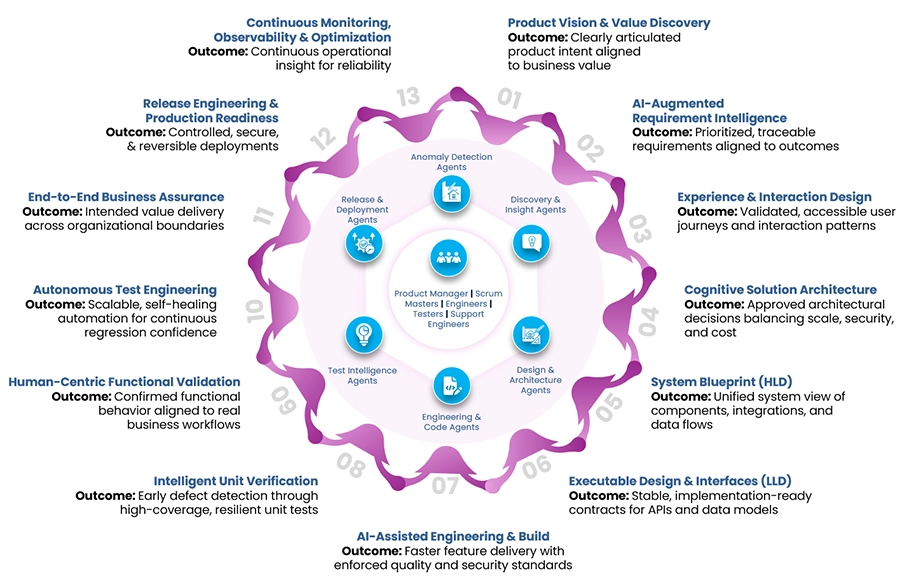

At Cybage, we view this transformation through an AI-augmented SDLC structured across 13 connected lifecycle phases, from early product discovery to post-production observability. Rather than introducing AI at a single stage, we embed intelligence throughout the engineering lifecycle to translate strategic intent into predictable delivery, measurable outcomes, and continuous improvement.

- Product Vision & Value Discovery: Analyzes market signals and customer insights to define product priorities and opportunities.

- AI-Augmented Requirement Intelligence: Generates structured user stories, clarifies scope, and strengthens traceability across requirements.

- Experience & Interaction Design: Enhances product design through faster wireframing, prototyping, and usability validation.

- Cognitive Solution Architecture: Recommends architectural patterns while evaluating trade-offs related to cost, scalability, and security.

- System Blueprint (HLD): Validates product components, integrations, and data flows to ensure architectural integrity.

- Executable Design & Interfaces (LLD): Reinforces API and data contracts and detects design inconsistencies early.

- AI-Assisted Engineering & Build: Speeds up development with AI-driven coding, refactoring, and secure implementation practices.

- Intelligent Unit Verification: Generates unit tests, improves coverage, and identifies edge cases early in the development cycle.

- Human-Centric Functional Validation: Uses AI to suggest risk-based scenarios while human testers validate workflows and business logic.

- Autonomous Test Engineering: Enables self-healing test suites and improves test reliability by reducing flakiness.

- End-to-End Business Assurance: Detects cross-application issues and safeguards critical business processes.

- Release Engineering & Production Readiness: Assesses release risks and strengthens CI/CD pipelines and deployment safeguards.

- Continuous Monitoring, Observability & Optimization: Enables early anomaly detection, faster root cause analysis, and fewer production incidents.

Together, these phases establish strong, intelligence-driven engineering pipelines and practices that support predictable delivery and continuous product improvement.
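As a small illustration of the final phase in the list above, early anomaly detection can be as simple as flagging a metric reading that sits far outside its recent history. The following sketch uses a trailing-window z-score. It is not a description of any particular Cybage tooling: the function name, window size, threshold, and sample latencies are assumptions chosen for the example.

```python
from statistics import mean, stdev
from typing import List, Sequence


def find_anomalies(
    values: Sequence[float],
    window: int = 5,
    threshold: float = 3.0,
) -> List[int]:
    """Return indices whose value is more than `threshold` standard
    deviations away from the mean of the preceding `window` values."""
    anomalies: List[int] = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Perfectly flat history: flag any change at all.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical request latencies in milliseconds, with one obvious spike.
latencies_ms = [100, 102, 98, 101, 99, 100, 103, 450, 101, 100]
print(find_anomalies(latencies_ms))  # prints [7]
```

Production observability stacks typically use more robust methods, such as seasonal baselines or learned models, but the principle is the same: compare each new observation against an expectation derived from recent behavior.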

Building Product Engineering Applications Designed for AI

Realizing the full potential of AI will require more than adding new tools to existing workflows. It will demand engineering products and platforms designed from the ground up to support intelligence, adaptability, and continuous learning.

At Cybage, this perspective shapes how we deliver Digital Product Engineering Services for organizations building next-generation software platforms.

Future-ready enterprises will increasingly rely on scalable, cloud-native architectures capable of supporting data-intensive workloads and continuous model training and deployment. Data platforms will enable reliable ingestion, governance, and contextual enrichment so that AI-driven products consistently operate on high-quality, trusted data.

Equally important will be strong engineering governance. As AI becomes deeply embedded in core products and applications, organizations will need robust observability, monitoring, and explainability frameworks to ensure transparency, trust, and regulatory compliance.

Together, these capabilities will enable organizations to move beyond isolated AI experiments toward scalable, AI-driven engineering environments that continuously evolve, learn, and power long-term innovation.

Download the whitepaper to see how Cybage’s intelligent engineering pipeline extends beyond code delivery, connecting discovery, architecture, build, validation, release readiness, and production observability so that leaders can operationalize governance and deliver software products with greater predictability and scale.