Success Story

About the Client

- The client is an independent statutory corporation responsible for operating and maintaining a provincial land title and survey system. It provides secure, legislated land registration, survey access, and parcel mapping services to professionals, government agencies, and the public.

- Its web-based Help Centre serves as the primary self-service channel for registration guidance, account setup, and search services.

Business Needs| Replacing a Legacy Intent-Based Chatbot

The existing chatbot was built on a rigid intent-based NLP architecture with limited coverage and high manual maintenance overhead.

The client required a next-generation Generative AI assistant to:

- Handle open-ended, natural language queries across full Help Centre content

- Expand knowledge coverage beyond limited predefined intents

- Reduce manual maintenance through a managed RAG architecture

- Improve response speed and contextual accuracy while maintaining regional compliance

- Enable scalable knowledge ingestion, indexing, and discoverability across structured and unstructured Help Centre data

- Provide visibility into user behavior, query patterns, and content effectiveness through analytics

Solutions| Managed RAG Architecture on Amazon Bedrock

A production-grade Generative AI chatbot was deployed using Amazon Bedrock and a fully managed retrieval pipeline. The solution was further developed into a Knowledge Data Platform for Support Analytics, enabling both intelligent retrieval and the generation of actionable insights.

- Managed Knowledge Base – Amazon Bedrock Knowledge Base, integrated with Amazon OpenSearch Serverless, replaced the self-hosted vector database that had been evaluated. Amazon Titan Text Embeddings V2 enables semantic retrieval. Enhanced data ingestion and indexing pipelines support continuous synchronization of Help Centre content, metadata enrichment, and improved semantic structuring for higher-quality retrieval.

- Foundation Model Selection – GPT-4o-mini, Claude 3 Haiku, and Claude 3 Sonnet were evaluated on latency, accuracy, cost, and availability in the Canada region. Claude 3 Haiku was selected for its low latency, large context window (up to 200K tokens), and regional compliance.

- Relevance Optimisation – Cohere Rerank 3.5 refines retrieved results before response generation.

- Backend Architecture – Containerised AI-API deployed on Amazon EKS with health probes and ReplicaSets for self-healing and high availability. Secure Bedrock connectivity via AWS PrivateLink.

- Frontend & Storage – UI hosted on Amazon S3 with CloudFront and AWS WAF. Chat history stored in Amazon DynamoDB (24-hour TTL). Amazon RDS (PostgreSQL) stores traces and is replicated for disaster recovery (DR). Optimized indexing strategies and unified access patterns across knowledge sources improve data accessibility and searchability.

- Guardrails & Observability – NeMo Guardrails enforces topic-level allow/deny controls. LangFuse captures LLM traces. GlitchTip and Imperva provide monitoring and application security. A dedicated analytics layer aggregates query logs, interaction data, and retrieval performance metrics to enable reporting dashboards, usage trend analysis, and continuous optimization. Content intelligence capabilities identify knowledge gaps, trending topics, and areas for Help Centre optimization.

- Data Governance – Role-based access control (RBAC) is enforced through AWS IAM, providing secure, controlled access to data and services. End-to-end encryption, PrivateLink connectivity, and compliance-aligned data handling support adherence to regional regulatory requirements.
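The retrieve, rerank, and generate flow described in the bullets above can be illustrated with a minimal Python sketch. This is not the client's implementation: `Passage`, `build_prompt`, `answer`, the prompt wording, and the `candidates`/`top_k` defaults are all hypothetical. In a deployment like the one described, the three injected callables would presumably wrap the Bedrock Knowledge Base query, Cohere Rerank 3.5, and Claude 3 Haiku respectively.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass
class Passage:
    text: str
    score: float  # relevance score assigned by the current stage


def build_prompt(query: str, passages: Sequence[Passage]) -> str:
    """Assemble a grounded prompt from the selected passages (wording is illustrative)."""
    context = "\n\n".join(f"[{i + 1}] {p.text}" for i, p in enumerate(passages))
    return (
        "Answer using only the Help Centre excerpts below.\n\n"
        f"{context}\n\nQuestion: {query}"
    )


def answer(
    query: str,
    retrieve: Callable[[str, int], List[Passage]],
    rerank: Callable[[str, List[Passage]], List[Passage]],
    generate: Callable[[str], str],
    candidates: int = 20,  # assumed size of the broad candidate set
    top_k: int = 3,        # assumed number of passages passed to the model
) -> str:
    """Retrieve a broad candidate set, rerank it, and generate from the best top_k."""
    retrieved = retrieve(query, candidates)
    # The reranker returns the same passages with new scores; sort best-first.
    reranked = sorted(rerank(query, retrieved), key=lambda p: p.score, reverse=True)
    return generate(build_prompt(query, reranked[:top_k]))
```

Injecting the three stages as callables keeps the orchestration testable without cloud access and makes it straightforward to swap a model or reranker during evaluation.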
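The 24-hour TTL on chat history mentioned above can be sketched as follows. DynamoDB TTL works from a Number attribute holding an expiry time in epoch seconds, so each item needs that value written at creation. The attribute names (`session_id`, `created_at`, `expires_at`) and the helper `chat_history_item` are assumptions for illustration, not the client's schema.

```python
import time
from typing import Optional

CHAT_TTL_SECONDS = 24 * 60 * 60  # 24-hour retention, as stated in the case study


def chat_history_item(session_id: str, role: str, text: str,
                      now: Optional[int] = None) -> dict:
    """Build a chat-history item whose expiry is 24 hours after creation."""
    created = int(time.time()) if now is None else now
    return {
        "session_id": session_id,  # hypothetical partition key
        "created_at": created,     # hypothetical sort key (epoch seconds)
        "role": role,
        "text": text,
        # TTL attribute: epoch seconds after which DynamoDB may delete the item.
        "expires_at": created + CHAT_TTL_SECONDS,
    }
```

With boto3, such an item could be written via `table.put_item(Item=chat_history_item(...))`, provided TTL is enabled on the table for the chosen attribute. Because DynamoDB deletes expired items in the background rather than at the exact expiry time, readers that need a strict 24-hour cutoff would also filter on `expires_at`.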

Business Impact

- Fully replaced the intent-based chatbot with a conversational Generative AI assistant

- Improved response speed via low-latency Bedrock inference

- Enhanced contextual accuracy through managed RAG and reranking

- Increased self-service engagement across Help Centre users

- Reduced support workload through automated query resolution

- Established a scalable Knowledge Data Platform that transforms support interactions into actionable insights

- Enabled data-driven decision-making through user query analytics and content performance tracking

- Improved content strategy by identifying gaps, redundancies, and emerging user needs

- Reduced data silos by consolidating fragmented knowledge sources into a unified platform

- Accelerated insight generation through real-time query analytics and reporting

- Improved decision-making via data-driven visibility into user behavior and content effectiveness

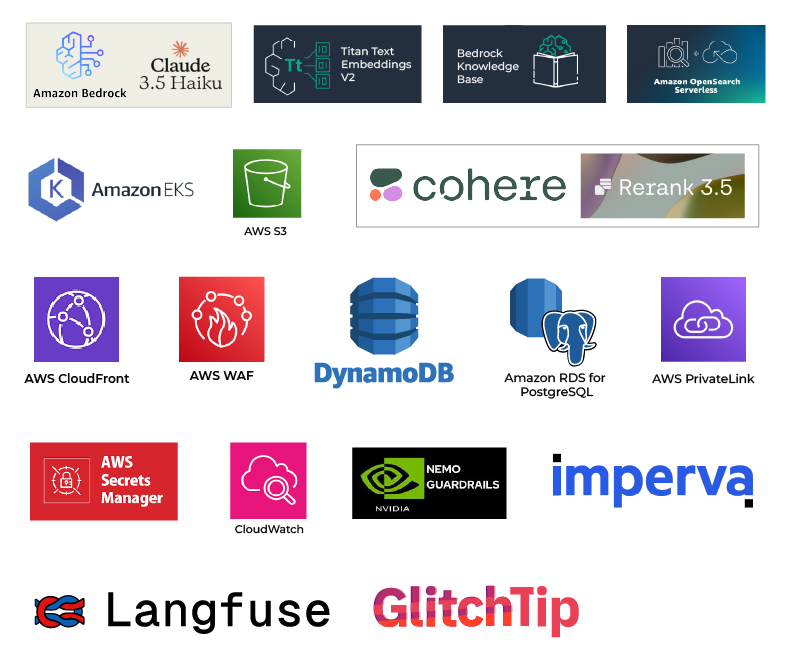

